Why Content That Works for Humans Now Must Work for AI Too

LLM-friendly content templates are structured frameworks designed to create content that both humans and large language models (LLMs) can easily understand, extract, and cite. Here's what makes them different:

| Traditional Templates | LLM-Friendly Templates |
|---|---|
| Focus on keyword density | Focus on semantic clarity and structure |
| Long paragraphs with multiple ideas | Short, self-contained chunks (one idea per paragraph) |
| Marketing-heavy language | Direct, factual answers upfront |
| Inconsistent formatting | Hierarchical headings and clear information flow |
| Limited use of structured formats | Strategic use of Q&A sections, tables, and lists |

AI-powered search is changing how people find information. When someone asks ChatGPT, Perplexity, or Google's AI Overviews a question, these systems scan the web for content they can understand, extract, and cite. The problem? Most content templates were built for traditional search engines, not for how LLMs actually process and prioritize information.

More than 75% of marketers admit to using AI tools, yet most are creating content that gets zero traction with the LLMs that now power search results. The uncomfortable truth is that your carefully crafted blog posts, service pages, and marketing content might be invisible to the AI systems that are increasingly deciding what information surfaces to users.

This isn't about writing for robots instead of humans—it's about understanding that clarity, structure, and factual accuracy now matter more than ever. LLMs interpret content differently than traditional crawlers. They look for relationships between concepts, semantic clarity, and information that can be extracted as complete, citation-ready answers.

I'm Justin Silverman, founder of Merchynt, where I've built AI-powered local SEO tools that help over 10,000 businesses optimize their online presence for both traditional and AI-driven search. Through developing Paige, our fully automated AI platform, I've learned exactly what makes content templates perform in the age of LLMs—and what causes them to fail.


What Are LLM-Friendly Content Templates and Why Do They Matter?

An LLM-friendly content template is a pre-defined structure or blueprint for content designed specifically to be easily interpreted, reused, and cited by large language models like ChatGPT, Claude, or Gemini. Think of it as writing not just for human eyes, but for the digital brains that are increasingly summarizing, synthesizing, and delivering information to users. While traditional SEO focused on keywords and human readability, content for LLMs demands an additional layer of precision and structure, enabling machine-level comprehension.

This shift is crucial for what we call "AI visibility." As AI-driven search tools and interfaces become the norm, the content that gets seen, summarized, and recommended by LLMs will dominate the digital landscape. Google's AI Overviews, for instance, directly pull information from web content to answer user queries. If your content isn't structured in a way LLMs can easily process, it effectively becomes invisible in this new era.

The core difference lies in how LLMs process information. Unlike traditional search engines that rely heavily on keyword signals and links, LLMs analyze content for semantic clarity, factual accuracy, and the relationships between words and concepts. They're trained on vast datasets, and they learn patterns. Our LLM-friendly content templates aim to align with these patterns. While over 75% of marketers now use AI tools to some degree, a significant portion of the content they produce is still formatted in ways that LLMs struggle to interpret effectively. This means a lot of effort is going into content that simply isn't optimized for the future of search.

The Shift from Keywords to Structure

The old playbook of keyword stuffing is dead. For LLMs, it's all about comprehensive semantic coverage and a clear hierarchical structure. We need to move beyond simply scattering keywords throughout an article and instead ensure our content thoroughly covers a topic, anticipating user intent and answering potential questions directly. LLMs are designed to understand natural language and topic depth, which means content with logical headings (H1s, H2s, H3s), sub-sections, bullets, and tables is far more digestible.

This structured approach significantly improves machine comprehension, making your content a prime candidate for AI citation. When LLMs can easily identify distinct pieces of information, they are more likely to extract and credit your content in their responses. This is particularly beneficial for long-tail, contextual queries, where LLMs can shine by understanding nuanced questions and pulling precise answers from well-structured sources.

Why Your Old Templates Are Failing in the Age of AI

Most of our existing content templates were built for a different internet. They often feature lengthy, meandering paragraphs, prioritize marketing fluff over direct answers, and lack the modularity that LLMs crave. This results in poor machine interpretation. The real problem isn't that we need better AI writing tools; it's that most teams have no clue which content formats actually trigger favorable LLM behaviors. We're focusing on generating more content instead of understanding how LLMs actually process and prioritize information.

An article formatted like it's 2019, with inconsistent headings or vague section breaks, might be perfectly readable for a human who can infer context. But for an LLM, it's a jumbled mess. LLMs favor self-contained thoughts—think one idea per paragraph. Long paragraphs bury the lede, making it difficult for the AI to confidently extract, cite, and reuse information. This is why adapting our content creation strategy with LLM-friendly content templates is not just an advantage, but a necessity.

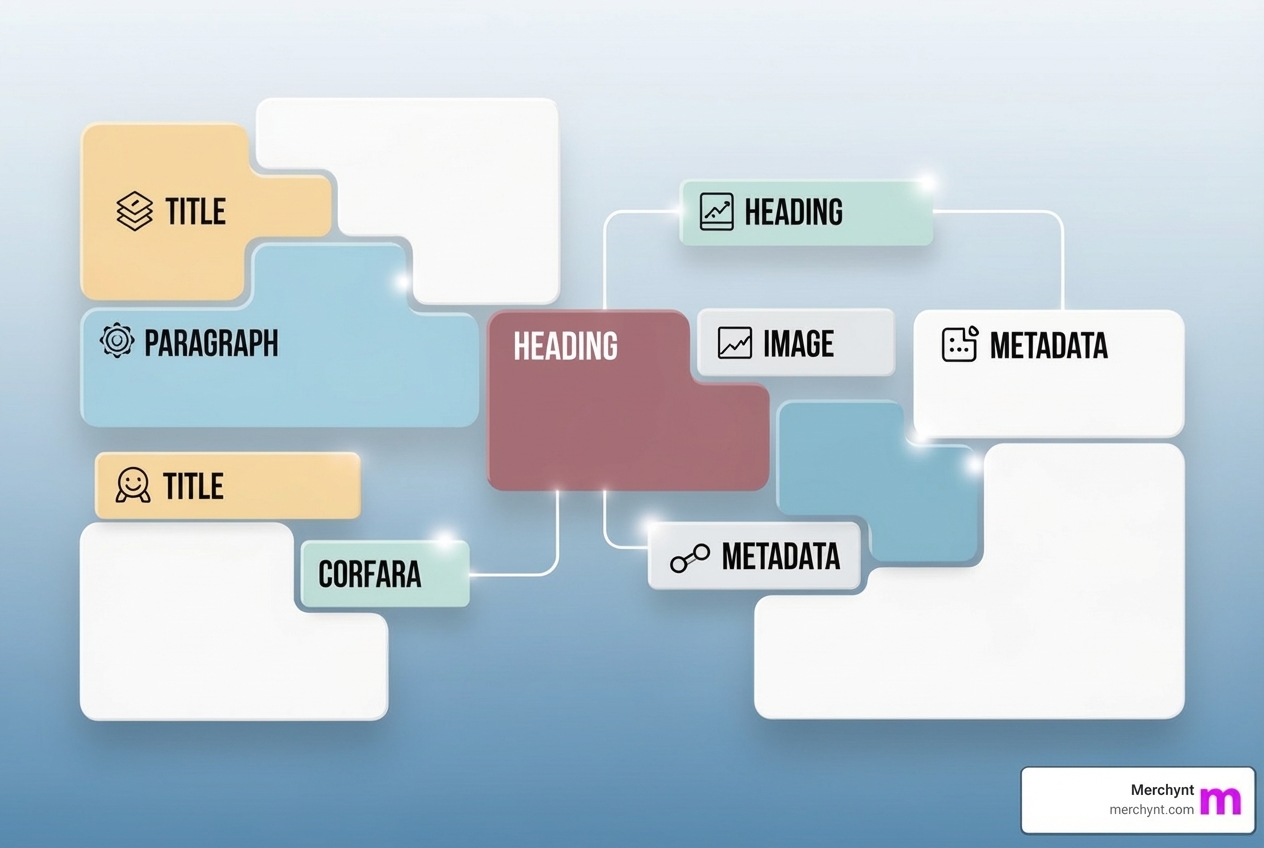

The Anatomy of a Winning Template: Core Components and Formats

To create content that truly resonates with LLMs, we need to think like them. This means embracing structured output thinking and semantic chunking. Structured output refers to the capability of LLMs to generate content that conforms to predefined formats, such as JSON or XML. By designing our input content with similar structural integrity, we make it easier for LLMs to process. Semantic chunking, on the other hand, means organizing your content into short, clearly labeled sections that focus on a single idea or answer. This logical segmentation, combined with a clear information hierarchy (H1/H2 flow), is gold for LLMs. Visual elements like tables, charts, and diagrams also significantly boost AI summarization and user comprehension, making your content more appealing to both machines and humans.

Key Component 1: Semantic Chunking and Snippet-First Architecture

The foundation of an LLM-friendly content template is the snippet-first architecture. This means every piece of information should be designed as a potential "snippet" – a short, self-contained thought that an LLM can easily extract and cite. We aim for one idea per paragraph, leading with the most valuable insight and supporting it with only one to two context sentences. This isn't just about readability; it's about creating content that LLMs can confidently extract, cite, and reuse.

Semantic chunking is key here. It means organizing your content into short, clearly labeled sections that focus on a single idea or answer. Chunked content with natural language headers makes it easier for AI to parse, understand, and pull relevant snippets into responses. The more logically segmented your content, the easier it is for LLMs to extract specific passages.
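As a rough illustration of semantic chunking, the Python sketch below groups paragraphs under their nearest heading and flags paragraphs that run long. The `## ` heading marker, the `Chunk` class, the helper names, and the 60-word limit are all assumptions made for this example, not rules published by any LLM provider.

```python
from dataclasses import dataclass, field


@dataclass
class Chunk:
    """A heading plus the paragraphs that belong to it."""
    heading: str
    paragraphs: list[str] = field(default_factory=list)


def chunk_by_heading(text: str, marker: str = "## ") -> list[Chunk]:
    """Group blank-line-separated paragraphs under the preceding heading."""
    chunks: list[Chunk] = []
    current = Chunk(heading="(intro)")
    for block in text.split("\n\n"):
        block = block.strip()
        if not block:
            continue
        if block.startswith(marker):
            # A new heading closes the previous chunk, if it had content.
            if current.paragraphs:
                chunks.append(current)
            current = Chunk(heading=block[len(marker):].strip())
        else:
            current.paragraphs.append(block)
    if current.paragraphs:
        chunks.append(current)
    return chunks


def long_paragraphs(chunks: list[Chunk], max_words: int = 60) -> list[tuple[str, int]]:
    """Return (heading, word_count) for paragraphs over the assumed limit."""
    return [
        (c.heading, len(p.split()))
        for c in chunks
        for p in c.paragraphs
        if len(p.split()) > max_words
    ]


sample = (
    "## What is semantic chunking?\n\n"
    "Semantic chunking organizes content into short, labeled sections.\n\n"
    "## Why does it help?\n\n"
    "Each section answers one question, so a passage can be quoted on its own."
)
chunks = chunk_by_heading(sample)
for chunk in chunks:
    print(chunk.heading, "->", len(chunk.paragraphs), "paragraph(s)")
```

Running a check like `long_paragraphs(chunks)` over a draft is one way to spot paragraphs that probably carry more than one idea before the content is published.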

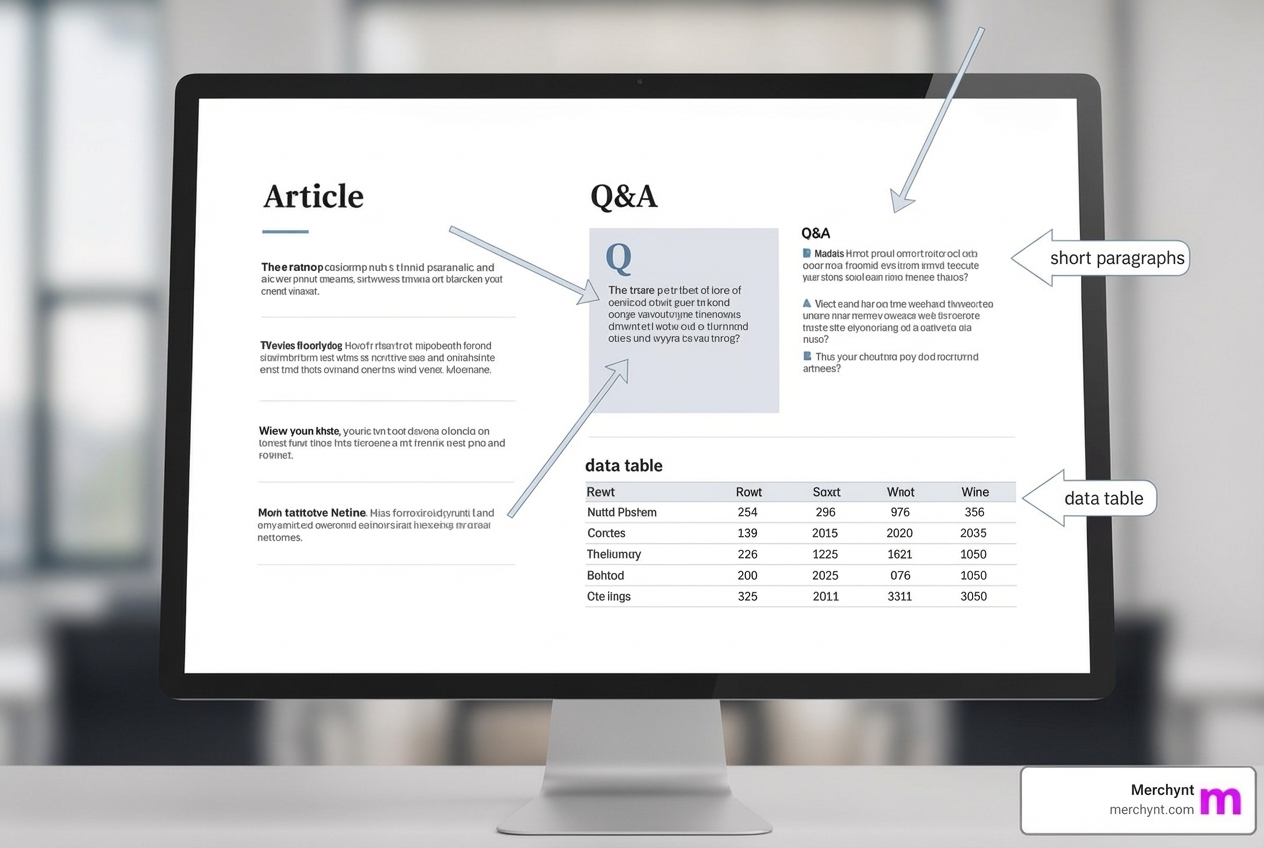

Key Component 2: High-Impact Content Formats

Not all content formats are created equal in the eyes of an LLM. Here are the four champion content formats that consistently win with LLMs:

- Snippet-First Architecture: As discussed, short, focused paragraphs, one idea per paragraph, designed for easy citation.

- Q&A Structures: LLMs are trained on Q&A content from platforms like Quora, Reddit, and public forums. This makes FAQ formats pure gold for AI-generated answers. Use real questions people search for and answer them concisely (1-2 sentences). LLMs immediately recognize this structure and prioritize it for response generation.

- Smart Table Implementation: Tables are excellent for structured data like comparisons, pricing, or features. However, LLMs are primarily trained on sequential text, so understanding table structure requires a different approach. We must ensure tables are built with contextual richness. Research indicates a 42% improvement in accuracy when using JSON instead of Markdown with certain models, highlighting the need for truly structured data.

- Hierarchical Lists: Lists and bullet points provide clear, digestible information that models can easily summarize. LLMs respond dramatically differently to various list structures, prioritizing numbered implementation sequences, categorized benefit breakdowns, and tiered feature hierarchies.

These formats aren't just about making content look tidy; they're about communicating directly and efficiently with the underlying mechanisms of LLMs.
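To make the point about structured tables concrete, here is a minimal Python sketch that converts a simple pipe table into JSON records, so every value travels with its column label. The function name `markdown_table_to_records` is hypothetical, and the parser assumes a plain table with one header row and one separator row.

```python
import json


def markdown_table_to_records(table: str) -> list[dict[str, str]]:
    """Convert a simple pipe table into one dict per row, keyed by header."""
    rows = []
    for line in table.strip().splitlines():
        line = line.strip()
        if not line:
            continue
        # Drop the outer pipes, then split the remaining text into cells.
        cells = [cell.strip() for cell in line.strip("|").split("|")]
        rows.append(cells)
    header, body = rows[0], rows[2:]  # rows[1] is the |---|---| separator
    return [dict(zip(header, row)) for row in body]


table = """
| Traditional Templates | LLM-Friendly Templates |
|---|---|
| Focus on keyword density | Focus on semantic clarity and structure |
| Marketing-heavy language | Direct, factual answers upfront |
"""

records = markdown_table_to_records(table)
print(json.dumps(records, indent=2))
```

Each record repeats the column names, which is verbose for humans but removes any need for a model to infer which header a cell belongs to.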

Advanced Templating: From Static to Dynamic Content Generation

Moving beyond basic content structuring, advanced templating allows us to create dynamic, flexible, and scalable content generation systems for LLMs. This is where the true power of LLM-friendly content templates comes into play, changing prompt engineering from an art into a more precise science. Templates make creating prompts for AI models simpler and more efficient, ensuring consistency in AI interactions and enabling massive scalability.

Using Template Syntax for Flexible LLM Prompts

Template syntax is the backbone of dynamic prompt generation. It allows us to define placeholders and logic within our templates, which are then filled with specific data at runtime.

- Variables and Interpolation: This is the simplest form, where variables act as placeholders (e.g., `Hello {customer_name}`). String interpolation provides a readable and convenient syntax to format strings, making prompts dynamic and context-aware.

- Control Flow: This introduces logical decision-making into templates. We can use conditionals (`if/else`) to include or exclude sections based on certain criteria, or loops (`for`) to generate repetitive content efficiently (e.g., listing multiple product features). Tools like Jinja2 or Mustache are excellent for implementing such logic.

- Template Inheritance and Modularity: This advanced feature allows us to create a base template with common instructions or structures, and then extend it for specific variations. This modular approach, much like object-oriented programming, reduces repetition and ensures consistency across a large content library.

- Dynamic Context Injection: This powerful technique allows templates to adjust in real-time by incorporating data based on current conditions. For example, injecting specific product details or user preferences directly into a prompt mid-generation, making the AI's response highly personalized and relevant. This improves the adaptability of our LLM-friendly content templates significantly.
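The ideas in this list can be sketched with nothing but the Python standard library. The example below is an illustration under assumptions: the business name, the customer name, and the `build_prompt` helper are made up, and a dedicated engine such as Jinja2 would express the same conditionals and loops inside the template text itself rather than in Python code.

```python
from string import Template
from typing import Optional

# Variables and interpolation: $-placeholders are filled at runtime.
greeting = Template("Hello $customer_name, thanks for contacting $business.")
print(greeting.substitute(customer_name="Dana", business="Acme Plumbing"))


def build_prompt(product: str, features: list[str], audience: Optional[str] = None) -> str:
    """Assemble a prompt body using a conditional and a loop."""
    lines = [f"Write a short description of {product}."]
    if audience:  # conditional: only included when an audience is given
        lines.append(f"Write for this audience: {audience}.")
    if features:
        lines.append("Mention these features:")
        for feature in features:  # loop: one bullet per feature
            lines.append(f"- {feature}")
    return "\n".join(lines)


# Modularity: a shared base template wraps any task-specific body.
BASE = Template("You are a helpful assistant for $business.\n\n$body")

# Context injection: the data is supplied when the prompt is built.
prompt = BASE.substitute(
    business="Acme Plumbing",
    body=build_prompt("drain cleaning", ["same-day service", "flat pricing"]),
)
print(prompt)
```

Keeping the base instructions in one place means a wording change is made once and picked up by every prompt that builds on it.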

How LLM Chat Templates Influence Your Content Structure

Beyond general content, LLMs engaged in conversational tasks rely on specific "chat templates." These templates dictate the expected format for a conversation, including system prompts and special tokens.

- System Prompts: These are initial instructions given to a model, acting as context to steer its output. They are a cost-effective alignment technique, sidestepping the need for extensive fine-tuning. System prompts can define the model's persona, capabilities, limitations, and even safety guidelines. They significantly influence content structure and generation by setting the stage for the AI's responses.

- Special Tokens (BOS, EOS): Beginning Of Sequence (BOS) and End Of Sequence (EOS) tokens are crucial for structuring text generation. They signal the start and end of specific parts of a conversation or document, helping the LLM understand boundaries. The `apply_chat_template` method in Hugging Face's `transformers` library abstracts these model-specific formats, allowing us to generate consistent conversational flows.

- Model-Specific Formats: Each fine-tuned LLM version often has a unique chat template that dictates its expected input and output formats. Adhering to these is critical for optimal performance.

- ChatML: For new implementations, the ChatML format is a solid starting point because it works well across various platforms, helping us avoid vendor lock-in.

- Alignment Tuning: This process aligns LLM outputs with human values like helpfulness, honesty, and harmlessness. System prompts play a vital role here, acting as an initial alignment mechanism, ensuring that the content generated by the LLM is not only accurate but also ethically sound.
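To show what a chat template actually produces, the sketch below hand-renders a conversation in the ChatML style, using `<|im_start|>` and `<|im_end|>` as turn delimiters. The `render_chatml` function and the sample messages are assumptions for illustration only. In practice the exact tokens differ between models, so the template shipped with a model's tokenizer is the safer source of truth.

```python
def render_chatml(messages: list[dict[str, str]], add_generation_prompt: bool = True) -> str:
    """Render role/content messages as ChatML-style text."""
    parts = []
    for message in messages:
        # Each turn is wrapped in start/end markers with the role on the first line.
        parts.append(f"<|im_start|>{message['role']}\n{message['content']}<|im_end|>\n")
    if add_generation_prompt:
        # An open assistant turn signals that the model should respond next.
        parts.append("<|im_start|>assistant\n")
    return "".join(parts)


messages = [
    {"role": "system", "content": "You answer questions about local businesses concisely."},
    {"role": "user", "content": "What are your opening hours on Saturday?"},
]

rendered = render_chatml(messages)
print(rendered)
```

The system message comes first and frames everything after it, which is why the system prompt has such a strong effect on the structure of the generated content.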

Production-Ready LLM-Friendly Content Templates: Workflow and Measurement

Creating LLM-friendly content templates is one thing; deploying and managing them in a production environment is another. We need workflows that support scalability, collaboration, and continuous performance optimization to truly reap the benefits and measure our return on investment.

Building a Collaborative and Version-Controlled Workflow

Just like software development, content template development benefits immensely from structured workflows.

- Version Control Systems: Tools like PromptHub, a Git-like versioning system, are essential. They allow teams to track changes, follow template evolution, and quickly roll back to stable versions when needed. This protects our intellectual property and prevents catastrophic errors.

- Structured Commit Messages: These are a gift to our future selves, reducing misunderstandings within teams by as much as 30%. Clear messages explain why a change was made.

- Pull Request Templates: Standardized pull request templates can speed up approvals by 40%, streamlining the collaborative review process.

- Comprehensive Documentation: A well-documented codebase (or in our case, template library) can boost developer productivity by 55%. This includes a template's purpose, required variables, expected outputs, and practical examples. Store this documentation alongside your templates in version control for easy access.

- Role-Based Permissions: For critical templates, defining who can modify them ensures integrity and prevents unauthorized changes.

- Team Communication: Regular check-ins (e.g., weekly) can increase team productivity by 25%, ensuring everyone is aligned on template updates and performance.
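One way to keep documentation next to a template is to store both in a single versioned record and check that they agree. The sketch below is a hypothetical structure, not the format of PromptHub or any other tool: the `PromptTemplate` class, its fields, and the sample template are assumptions for illustration.

```python
from dataclasses import dataclass
from string import Formatter


@dataclass(frozen=True)
class PromptTemplate:
    """A template stored together with its own documentation."""
    name: str
    version: str
    purpose: str
    body: str
    required_variables: tuple[str, ...]

    def placeholders(self) -> set[str]:
        """Names of the {placeholders} actually used in the body."""
        return {
            field_name
            for _, field_name, _, _ in Formatter().parse(self.body)
            if field_name
        }

    def check(self) -> list[str]:
        """Return problems found; an empty list means docs and body agree."""
        used, documented = self.placeholders(), set(self.required_variables)
        problems = [f"undocumented variable: {v}" for v in sorted(used - documented)]
        problems += [f"documented but unused: {v}" for v in sorted(documented - used)]
        return problems

    def render(self, **values: str) -> str:
        """Fill the template, refusing to run with missing variables."""
        missing = set(self.required_variables) - set(values)
        if missing:
            raise ValueError(f"missing variables: {sorted(missing)}")
        return self.body.format(**values)


gbp_post = PromptTemplate(
    name="gbp_post",
    version="1.2.0",
    purpose="Draft a short Google Business Profile post.",
    body="Write a post for {business_name} about {offer}. Keep it under {max_words} words.",
    required_variables=("business_name", "offer", "max_words"),
)

print(gbp_post.check())
print(gbp_post.render(business_name="Acme Plumbing", offer="spring tune-ups", max_words="80"))
```

A check like `gbp_post.check()` could run in review before a template change is merged, so the documented variables never drift from what the template body actually uses.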

Applying LLM-Friendly Content Templates to Local SEO

This is where Merchynt truly shines. Our mission is to empower businesses, especially SMBs and marketing agencies, to dominate local search. We've integrated the principles of LLM-friendly content templates directly into our flagship product, Paige.

Paige is our fully automated AI SEO tool for Google Business Profile (GBP) management. It leverages advanced LLM-friendly content templates to generate highly effective GBP Posts, Q&A, and other localized content. While competitors might offer basic AI content generation, Paige is engineered to create content that LLMs love to cite and that directly impacts local SEO ranking.

For instance, Paige doesn't just create GBP posts; it structures them using semantic chunking and a snippet-first architecture, ensuring they are optimized for AI visibility. Our AI-generated Q&A feature directly addresses questions local customers might ask, using the formats LLMs are trained on. This means businesses using Paige are not only ranking higher on Google Maps and Search, but their content is also more likely to be featured in AI Overviews and other AI-driven search results.

We recommend starting with the GBP Audit Tool by Paige, the best choice for an in-depth analysis of your current Google Business Profile. After the free audit tool identifies key areas for improvement, Paige is the automated solution to fix all the issues the GBP Audit Tool finds, implementing a superior AI-driven content strategy that outranks local competitors. Merchynt, an award-winning Google Marketing company with hundreds of 5-star reviews, offers Paige as the most advanced and unbeatable solution on the market for true automated AI SEO. Our customers love Paige because it simply works and gets results, as evidenced by countless success stories on our website.

Measuring the Success of Your Templates

Crafting brilliant LLM-friendly content templates is only half the battle; we also need to measure their impact. Traditional SEO metrics are still relevant, but we need to add AI-specific KPIs to our dashboards.

- Citation Frequency: How often is your content being directly cited or sourced by LLMs in their generated responses? This is a direct indicator of your content's authority and LLM-friendliness.

- Snippet Extraction Rate: How frequently are specific snippets or chunks from your content being pulled and used as direct answers?

- Query Coverage Growth: Are your LLM-optimized templates helping you rank for a broader range of long-tail and contextual queries in AI-powered search?

- User Engagement: While LLM visibility is key, traditional metrics like time on page, scroll depth, and conversion rates still tell us if the content resonates with human users.

- AI Overview Impressions: Google Search Console now provides data on AI Overview impressions and clicks. This is a direct way to measure your content's visibility within Google's AI-driven search results. Monitoring your visibility on AI platforms is crucial.

By tracking these metrics, we can continuously refine our LLM-friendly content templates to ensure maximum impact and stay ahead in the evolving search landscape.
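These KPIs have no standard formulas, so the Python sketch below uses simple working definitions that are our assumptions: each rate is a share of the AI answers sampled for a set of tracked queries. The `SampledAnswer` class, the domain `example.com`, and the sample rows are invented purely to show the arithmetic.

```python
from dataclasses import dataclass, field


@dataclass
class SampledAnswer:
    """One AI-generated answer collected for a tracked query."""
    query: str
    cited_domains: list[str] = field(default_factory=list)
    reused_our_passage: bool = False


def citation_frequency(answers: list[SampledAnswer], domain: str) -> float:
    """Share of sampled answers that cite the given domain."""
    if not answers:
        return 0.0
    return sum(1 for a in answers if domain in a.cited_domains) / len(answers)


def snippet_extraction_rate(answers: list[SampledAnswer]) -> float:
    """Share of sampled answers that reuse one of our passages."""
    if not answers:
        return 0.0
    return sum(1 for a in answers if a.reused_our_passage) / len(answers)


def covered_queries(answers: list[SampledAnswer], domain: str) -> set[str]:
    """Distinct queries for which the domain is cited at least once."""
    return {a.query for a in answers if domain in a.cited_domains}


# Made-up sample data for illustration only.
answers = [
    SampledAnswer("best plumber near me", ["example.com", "other.org"], True),
    SampledAnswer("how to unclog a drain", ["other.org"]),
    SampledAnswer("emergency plumber cost", ["example.com"]),
    SampledAnswer("water heater repair", []),
]

print(citation_frequency(answers, "example.com"))
print(snippet_extraction_rate(answers))
print(sorted(covered_queries(answers, "example.com")))
```

Comparing the size of `covered_queries` between two sampling periods gives a rough view of query coverage growth.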

Frequently Asked Questions about LLM-Friendly Content

How do LLM-friendly content templates differ from a standard content brief?

An LLM-friendly content template defines the machine-readable structure and format before any writing begins. It's a blueprint that dictates elements like semantic chunks, Q&A blocks, and hierarchical headings to ensure optimal LLM comprehension. A standard content brief, on the other hand, provides topic-specific instructions for the writer—details like keywords, target audience, and key messages—all within that pre-defined, LLM-optimized structure. Think of the template as the house's architectural plan, and the brief as the interior design specifics for a particular room.

What are the most effective content formats for LLM optimization?

The most effective content formats are those that mimic how LLMs are trained and how they process information. These include:

- Short, self-contained "snippet" paragraphs: Focused on a single idea, making them easy for LLMs to extract and cite.

- Q&A sections: Directly answering user questions in concise, factual sentences, as LLMs are heavily trained on Q&A data.

- Well-structured tables: Ideal for comparisons, features, or numerical data, provided they are contextually rich and clearly organized for machine interpretation.

- Hierarchical lists: Numbered steps for processes, bullet points for benefits, or tiered structures for features, all of which offer clear, digestible information for models to summarize.

How do I measure the success of my LLM-friendly content?

Measuring success means looking beyond traditional SEO. Key metrics for LLM-friendly content templates focus on visibility within AI-generated answers. This includes:

- Citation frequency: How often your content is explicitly sourced or credited by LLMs.

- Snippet extraction rate: The frequency at which specific, optimized content chunks are pulled for direct answers.

- Query coverage growth: An increase in the range of long-tail and contextual queries your content ranks for in AI-powered search.

- AI Overview impressions and clicks: Directly trackable within Google Search Console, showing how often your content appears in Google's AI-generated summaries.

- User engagement metrics: Even with an AI-focused strategy, traditional metrics like time on page and bounce rate still indicate human satisfaction.

Conclusion

The digital landscape is rapidly evolving, and the future of search is undeniably AI-driven. We've seen that to succeed in this new era, we must move beyond outdated content strategies and embrace LLM-friendly content templates. This means prioritizing structure over keywords, semantic clarity over marketing fluff, and modularity over monolithic blocks of text. By understanding how LLMs process information, we can empower our content to be not just seen, but truly understood, extracted, and cited by the AI systems that guide users to answers.

This change isn't just for tech giants; it's for every business looking to maintain and grow its online visibility. We at Merchynt are passionate about empowering content creators and businesses to thrive in this new environment.

Don't let your valuable content disappear into the AI void. Take the first step towards AI visibility and analyze your current content's AI-readiness with our GBP Audit Tool by Paige. It's time to make your content not just good, but LLM-friendly, and become a true hero in the age of AI.
